How to run a GitLab Runner that submits SLURM jobs

This document explains how to create a GitLab runner and configure it so that your CI/CD jobs are executed on the cluster using SLURM.

1. Create a token for registering a new GitLab runner

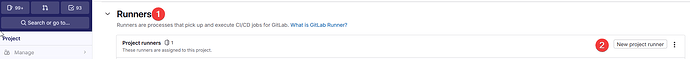

In your GitLab project, go to:

Settings → CI/CD → Runners → New project runner

Generate a new runner token:

Specify a runner tag.

Jobs that specify this tag in .gitlab-ci.yml will be executed by this runner.

Be sure to copy the token displayed at the end of the runner creation process (2).

This token is shown only once and is required in step 3.

2. Clone the slurm-gitlab-executor project

On login1, run:

git clone https://github.com/Algebraic-Programming/slurm-gitlab-executor.git slurm-gitlab-executor

cd slurm-gitlab-executor

3. Register your GitLab runner

From the cluster terminal, run the following command, replacing the placeholder with the token copied in step 1:

gitlab-runner register --name slurm-gitlab-executor --url https://gitlab.unige.ch --token glrt-<token-from-step-above>
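
Because the token is shown only once, it can be worth checking it before registering. The following is a minimal sketch of such a guard, assuming the token carries the glrt- prefix shown in the command above. register_runner is a hypothetical helper name, not part of gitlab-runner; the flags are exactly those used above.

```shell
# Sketch: refuse to register unless the argument looks like a runner token.
# register_runner is a hypothetical helper, not a gitlab-runner command.
register_runner() {
    token="$1"
    case "$token" in
        glrt-?*)
            # Same command as above, with the token passed as an argument.
            gitlab-runner register \
                --name slurm-gitlab-executor \
                --url https://gitlab.unige.ch \
                --token "$token"
            ;;
        *)
            echo "expected a token starting with glrt-" >&2
            return 1
            ;;
    esac
}
```

Call it as register_runner followed by your token. An empty or mistyped value is rejected before gitlab-runner is invoked.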

4. Generate a new runner configuration

Run the following, replacing /home/sagon with your own home directory:

./generate-config.sh "/home/sagon/.gitlab-runner/config.toml" > config.toml

Then adjust the generated config file as needed. For example:

[...]

builds_dir = "/home/sagon/slurm-gitlab-executor/wd/builds"

cache_dir = "/home/sagon/slurm-gitlab-executor/wd/cache"

[...]
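
If builds_dir and cache_dir point at a new location, it may help to create the directories before starting the runner. The sketch below assumes the clone lives in your home directory, as in the example paths. WD is a hypothetical variable name, and this guide does not confirm whether the executor creates these directories on its own.

```shell
# Sketch: create the working directories referenced by builds_dir and cache_dir.
# WD is an assumed location modelled on the example paths; adjust it to your clone.
WD="${WD:-$HOME/slurm-gitlab-executor/wd}"
mkdir -p "$WD/builds" "$WD/cache"

# Show the two settings as they appear in the generated file, if it exists.
if [ -f config.toml ]; then
    grep -E '^[[:space:]]*(builds_dir|cache_dir)[[:space:]]*=' config.toml \
        || echo "no builds_dir or cache_dir entries found in config.toml"
fi
```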

5. Start the runner on login1

Run:

gitlab-runner run --config config.toml
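
The command above runs in the foreground, so it normally ends when the terminal session closes. One possible way to keep it running is the standard nohup utility, sketched below. start_runner and runner.log are hypothetical names, and your site may prefer another mechanism (for example a terminal multiplexer).

```shell
# Sketch: start the runner in the background with its output in a log file.
# start_runner and runner.log are assumed names, not part of gitlab-runner.
start_runner() {
    config="${1:-config.toml}"
    if [ ! -f "$config" ]; then
        echo "config file not found: $config" >&2
        return 1
    fi
    # nohup lets the process survive the end of the terminal session.
    nohup gitlab-runner run --config "$config" > runner.log 2>&1 &
    echo "runner started with PID $!"
}
```

Run start_runner from the slurm-gitlab-executor directory, then inspect runner.log to confirm that the runner connected.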

6. Add a .gitlab-ci.yml to your project

In your GitLab repository, add a .gitlab-ci.yml file.

An example using SLURM is available here.
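
As a starting point, the sketch below writes a minimal .gitlab-ci.yml that only shows how a job is routed to the runner through its tag. The tag slurm and the job name hello-cluster are placeholders; use the tag chosen in step 1. It sets no SLURM-specific options, for which the example mentioned above remains the reference.

```shell
# Sketch: write a minimal .gitlab-ci.yml into the current directory.
# Warning: this overwrites an existing .gitlab-ci.yml.
# "slurm" is a placeholder tag; replace it with the tag chosen in step 1.
cat > .gitlab-ci.yml <<'EOF'
stages:
  - test

hello-cluster:
  stage: test
  tags:
    - slurm
  script:
    - hostname
EOF
```

Commit and push the file so that GitLab picks it up for the next pipeline.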

7. Launch a pipeline

In GitLab:

Build → Pipelines → New pipeline

You can now trigger a pipeline and configure SLURM parameters depending on your workflow.

Comments welcome!